Scalable I/O and checkpointing for Firedrake

ARCHER2-eCSE01-20

PI: Dr David A Ham (Imperial College London)

Co-I(s): Dr Lawrence Mitchell (Durham University), Dr Vaclav Hapla (ETH Zürich), Dr Matthew G Knepley (University at Buffalo)

Technical staff: Koki Sagiyama (Imperial College London)

Subject Area:

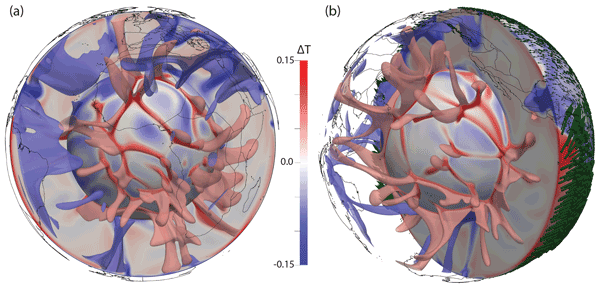

Firedrake [1] is a high-level, high-productivity system for the specification and solution of partial differential equations (PDEs) using the finite element method. The core data structures used to represent solutions of PDEs are the combination of a mesh of the domain of interest and a function belonging to some discrete function space. Although computation is fully and transparently parallel once a mesh is loaded, Firedrake has lacked scalable and flexible I/O checkpointing features for meshes and functions.

In this work we closed this gap by implementing a scalable and flexible parallel checkpointing infrastructure for meshes and functions using the standard HDF5 parallel file format. A crucial part of this work was the extension of the HDF5 interface of the Portable, Extensible Toolkit for Scientific Computation (PETSc) [2, 3]. Firedrake relies on this interface to manage unstructured mesh data and the association of function space degrees of freedom (DoFs) with mesh topology entities, such as cells, faces, edges, and vertices. In particular, we have extended the PETSc interface so that function space DoFs, together with their association with mesh topology entities, can be saved on one number of processes and loaded on a different number of processes. These improvements to PETSc benefit the broader community of scientific software that uses PETSc data structures, as well as Firedrake itself.
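The idea of saving DoFs in association with mesh entities, so that they can be reloaded on a different number of processes, can be illustrated with a toy sketch. This is not the PETSc or Firedrake implementation: plain Python lists stand in for MPI ranks, a dictionary stands in for the HDF5 file, and the helper names (`partition`, `save`, `load`) are hypothetical. It assumes one DoF per cell and a simple contiguous partition of globally numbered cells.

```python
# Toy illustration only: lists stand in for MPI ranks, a dict for the HDF5 file.

def partition(num_cells, num_ranks):
    """Split global cell numbers 0..num_cells-1 into contiguous blocks, one per rank."""
    base, extra = divmod(num_cells, num_ranks)
    blocks, start = [], 0
    for rank in range(num_ranks):
        size = base + (1 if rank < extra else 0)
        blocks.append(list(range(start, start + size)))
        start += size
    return blocks


def save(values_per_rank, blocks):
    """Each rank stores its DoF values keyed by the global number of the owning cell."""
    stored = {}
    for cells, values in zip(blocks, values_per_rank):
        for cell, dofs in zip(cells, values):
            stored[cell] = list(dofs)
    return stored


def load(stored, blocks):
    """Each rank reads the DoF values of the cells it owns under the new partition."""
    return [[stored[cell] for cell in cells] for cells in blocks]


num_cells = 10

# "Simulation" phase on 4 ranks: one DoF per cell, with value cell**2.
save_blocks = partition(num_cells, 4)
values = [[[float(cell) ** 2] for cell in cells] for cells in save_blocks]
stored = save(values, save_blocks)

# "Post-processing" phase on 3 ranks: the data follows the cells, not the ranks.
load_blocks = partition(num_cells, 3)
loaded = load(stored, load_blocks)
```

Because every value is keyed by a global entity number rather than by the rank that wrote it, the loading side is free to choose its own partition. The real infrastructure has to handle the same bookkeeping for unstructured meshes, multiple DoFs per entity, and parallel file access.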

We then adopted this new PETSc implementation in Firedrake, resulting in efficient and flexible checkpointing of meshes and functions. The new interface transparently supports mesh extrusion, which is widely used for thin-domain simulations such as coastal ocean simulations, and the saving of time series solutions of time-dependent problems.

Our new checkpointing feature allows restarting or post-processing on a number of processes appropriate to that phase of the simulation. For instance, it benefits users who pause and resume large-scale time-dependent simulations, and users who run simulations on HPC clusters and later post-process the results on a workstation.

[1] Rathgeber F, Ham DA, Mitchell L, Lange M, Luporini F, McRae ATT, et al. Firedrake: automating the finite element method by composing abstractions. ACM Trans Math Softw. 2016; 43:24:1–24:27. http://arxiv.org/abs/1501.01809.

[2] Balay S, Abhyankar S, Adams MF, Brown J, Brune P, Buschelman K, et al. PETSc Users Manual. Argonne National Laboratory; 2021. ANL-95/11 - Revision 3.15.

[3] Balay S, Gropp WD, McInnes LC, Smith BF. Efficient Management of Parallelism in Object Oriented Numerical Software Libraries. In: Arge E, Bruaset AM, Langtangen HP, editors. Modern Software Tools in Scientific Computing. Birkhäuser Press; 1997. p. 163–202.

Information about the code

Firedrake is a Python package, so its use will be indistinguishable from other Python usage in ARCHER2 logs. Firedrake is licensed under LGPLv3, and download instructions are available on the project website (https://firedrakeproject.org). For installation scripts and documentation for installation on ARCHER2, see the Firedrake on ARCHER2 GitHub.