- Current System Load - CPU, GPU

- Service Alerts

- Maintenance Sessions

- Previous Service Alerts

- System Status Mailings

- FAQ

- Usage statistics

Current System Load - CPU

The plot below shows the status of nodes on the current ARCHER2 Full System service. A description of each of the status types is provided below the plot.

- alloc: Nodes running user jobs

- idle: Nodes available for user jobs

- resv: Nodes in reservation and not available for standard user jobs

- plnd: Nodes planned for use by a future job. If pending jobs can fit in the gap before the future job is due to start, they can run on these nodes (often referred to as backfilling).

- down, drain, maint, drng, comp, boot: Nodes unavailable for user jobs

- mix: Nodes in multiple states

Note: the long-running reservation visible in the plot corresponds to the short QoS, which is used to support small, short jobs with fast turnaround time.
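The backfilling rule described for plnd nodes can be illustrated with a small sketch. This is not how the ARCHER2 scheduler is implemented: the function name `can_backfill` and its parameters are hypothetical, and the check only looks at node count and requested time limit, ignoring priorities, QoS limits and anything else a real scheduler weighs.

```python
from datetime import datetime, timedelta


def can_backfill(now, planned_start, free_nodes, job_nodes, job_time_limit):
    """Return True if a pending job fits in the gap before a planned job.

    Hypothetical helper: the job must need no more nodes than are free,
    and its requested time limit must end no later than the planned start.
    """
    fits_nodes = job_nodes <= free_nodes
    fits_time = now + job_time_limit <= planned_start
    return fits_nodes and fits_time


now = datetime(2026, 5, 19, 12, 0)
planned_start = datetime(2026, 5, 19, 14, 0)  # when the future job is due to start

# A 1-hour job on 4 nodes fits in the 2-hour gap on 8 planned nodes.
short_job_ok = can_backfill(now, planned_start, 8, 4, timedelta(hours=1))
# A 3-hour job would overrun the planned start, so it cannot be backfilled.
long_job_ok = can_backfill(now, planned_start, 8, 4, timedelta(hours=3))
print(short_job_ok, long_job_ok)
```

In this simplified picture, requesting a shorter time limit makes a job more likely to fit into such a gap.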

Current System Load - GPU

- alloc: Nodes running user jobs

- idle: Nodes available for user jobs

- resv: Nodes in reservation and not available for standard user jobs

- plnd: Nodes planned for use by a future job. If pending jobs can fit in the gap before the future job is due to start, they can run on these nodes (often referred to as backfilling).

- down, drain, maint, drng, comp, boot: Nodes unavailable for user jobs

- mix: Nodes in multiple states

Service Alerts

The ARCHER2 documentation also covers some Known Issues which users may encounter when using the system.

| Status | Type | Start | End | Scope | User Impact | Reason |

|---|---|---|---|---|---|---|

| Ongoing | Service Alert | 2026-05-19 10:50 | 2026-05-19 16:00 | ARCHER2 GPU nodes | Software available on the GPU nodes has changed | Update of GPU system software |

Maintenance Sessions

This section lists recent and upcoming maintenance sessions. A full list of past maintenance sessions is available.

No scheduled or recent maintenance sessions

Previous Service Alerts

This section lists the five most recent resolved service alerts from the past 30 days. A full list of historical resolved service alerts is available.

| Status | Type | Start | End | Scope | User Impact | Reason |

|---|---|---|---|---|---|---|

| Resolved | Service Alert | 2026-05-01 13:20 | 2026-05-01 14:05 | Cooling system | New work has been prevented from starting to reduce load on the cooling system | Issue with cooling infrastructure |

| Resolved | Service Alert | 2026-04-30 15:50 | 2026-04-30 18:00 | Cooling system | New work has been prevented from starting to reduce load on the cooling system | Issue with cooling infrastructure |

| Resolved | Service Alert | 2026-04-23 11:10 | 2026-04-23 12:10 | ARCHER2 network | University network issue has led to loss of DNS server and network connectivity. Login to ARCHER2 may fail. Access to SAFE may fail. | University-wide University of Edinburgh network issue. This is being worked on as a high priority. Update - this now appears to be resolved. |

| Resolved | Service Alert | 2026-04-23 08:30 | 2026-04-23 11:30 | ARCHER2 compute | Very low risk that starting new work will need to be paused, due to reduced resilience in the computer cooling loop | Essential maintenance on cooling loop pump |

| Resolved | Service Alert | 2026-04-17 14:00 | 2026-04-27 17:00 | ARCHER2 NVMe /scratch filesystem | There may be some brief interruptions in access to the NVMe /scratch filesystem | The NVMe filesystem experienced an issue. Our team have restored it but they may need to perform some failover actions to bring everything back to normal. |

System Status Mailings

If you would like to receive email notifications about system issues and outages, please subscribe to the System Status Notifications mailing list via SAFE.

FAQ

Usage statistics

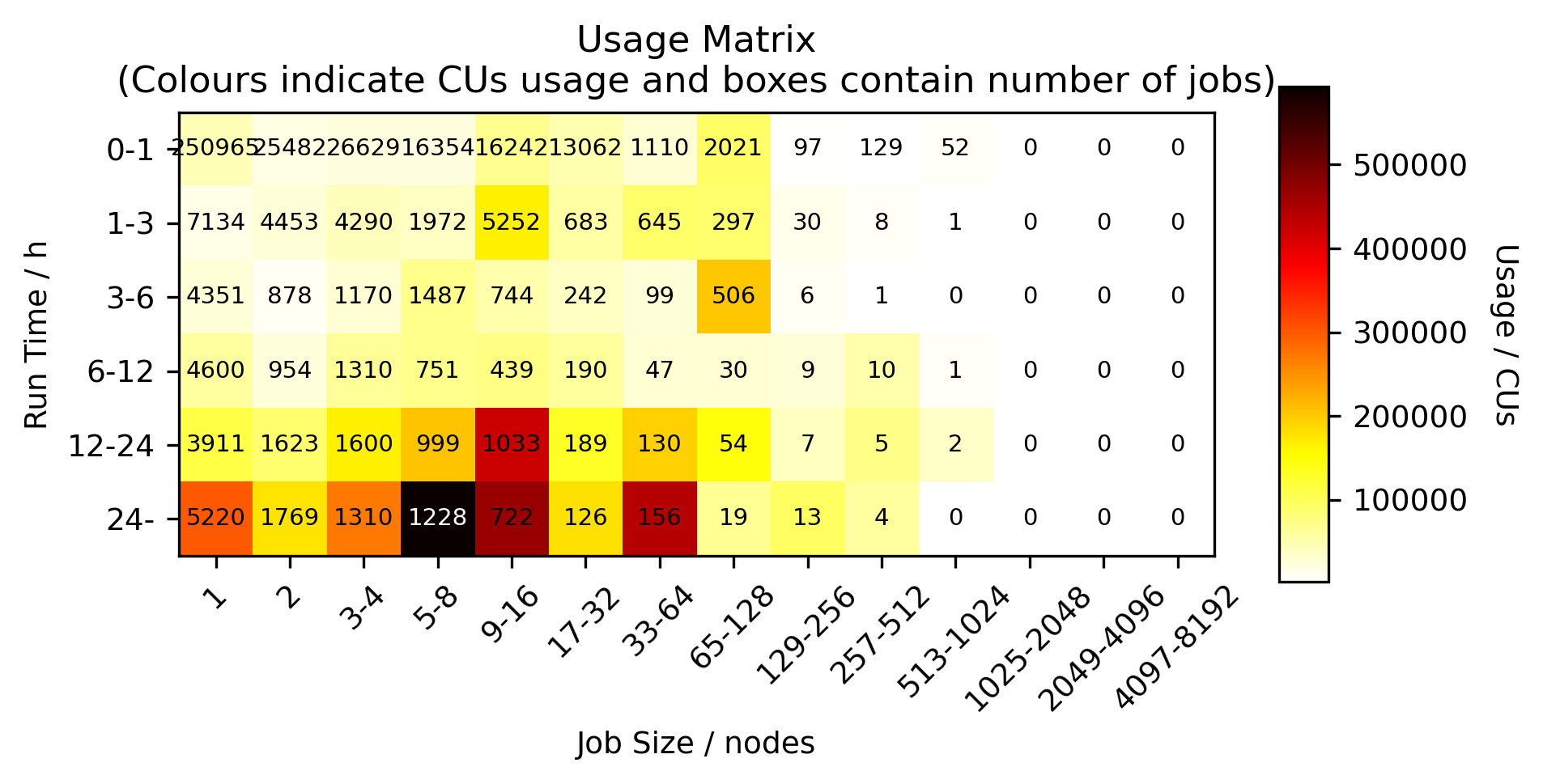

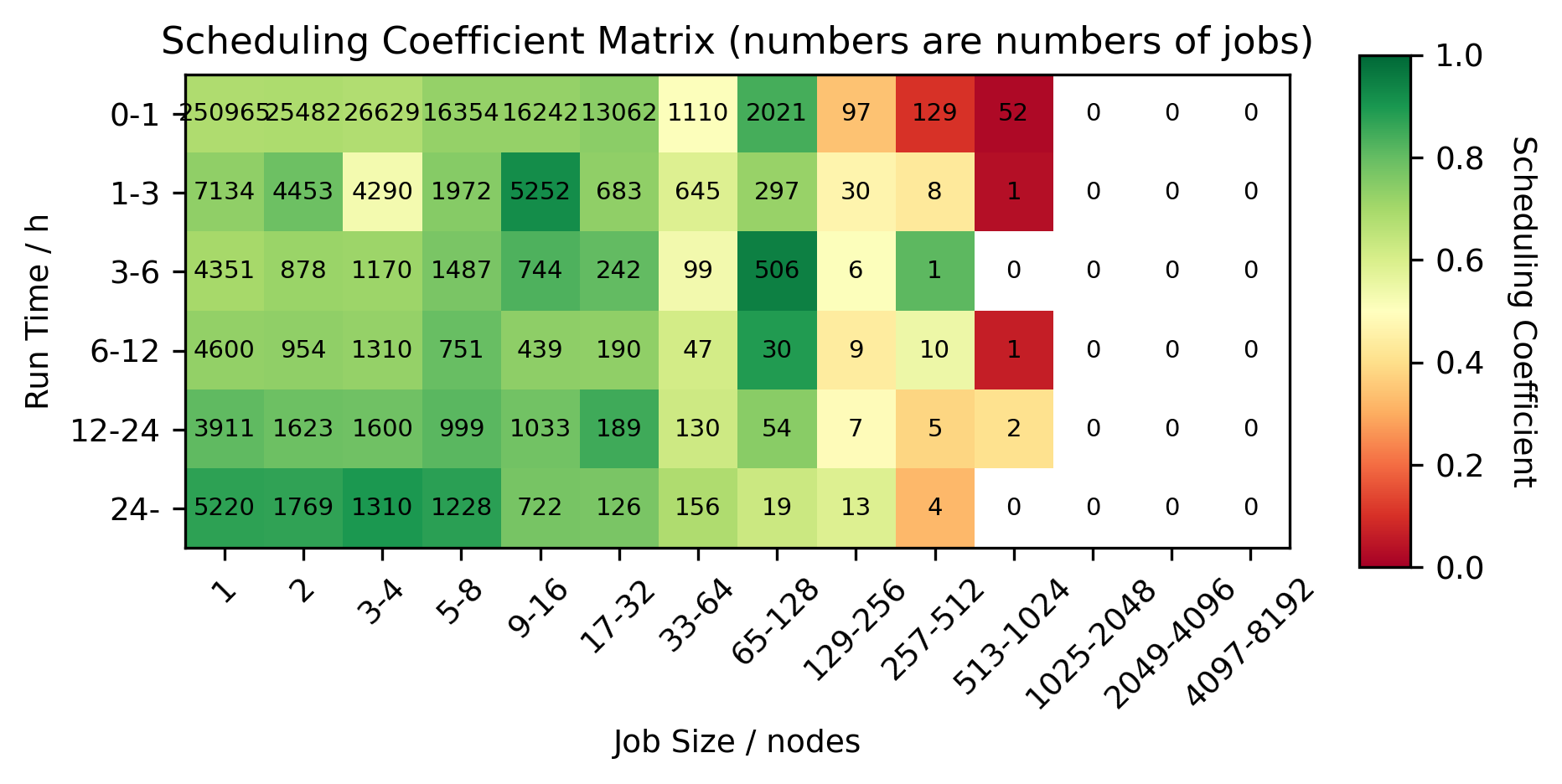

This section contains data on ARCHER2 usage for Apr 2026. Access to historical usage data is available at the end of the section.

Usage by job size and length

Queue length data

The colour indicates the scheduling coefficient, which is computed as [run time] divided by [run time + queue time]. A scheduling coefficient of 1 indicates that the job spent no time queuing; a scheduling coefficient of 0.5 means that the job spent as long queuing as it did running.
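As a minimal sketch of the scheduling coefficient defined above, the function below applies the formula directly. The function name and the choice of seconds as the unit are illustrative assumptions; any unit works as long as both times use the same one.

```python
def scheduling_coefficient(run_time, queue_time):
    """Return run_time / (run_time + queue_time).

    Both arguments must be non-negative and in the same unit (seconds here).
    """
    if run_time < 0 or queue_time < 0:
        raise ValueError("times must be non-negative")
    total = run_time + queue_time
    if total == 0:
        raise ValueError("run_time + queue_time must be positive")
    return run_time / total


# A job that started immediately: coefficient of 1.
no_wait = scheduling_coefficient(3600, 0)
# A job that queued for as long as it ran: coefficient of 0.5.
equal_wait = scheduling_coefficient(3600, 3600)
# A job that queued three times longer than it ran: coefficient of 0.25.
long_wait = scheduling_coefficient(3600, 10800)
print(no_wait, equal_wait, long_wait)
```

Values close to 0 therefore indicate jobs that spent most of their total turnaround time waiting in the queue.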

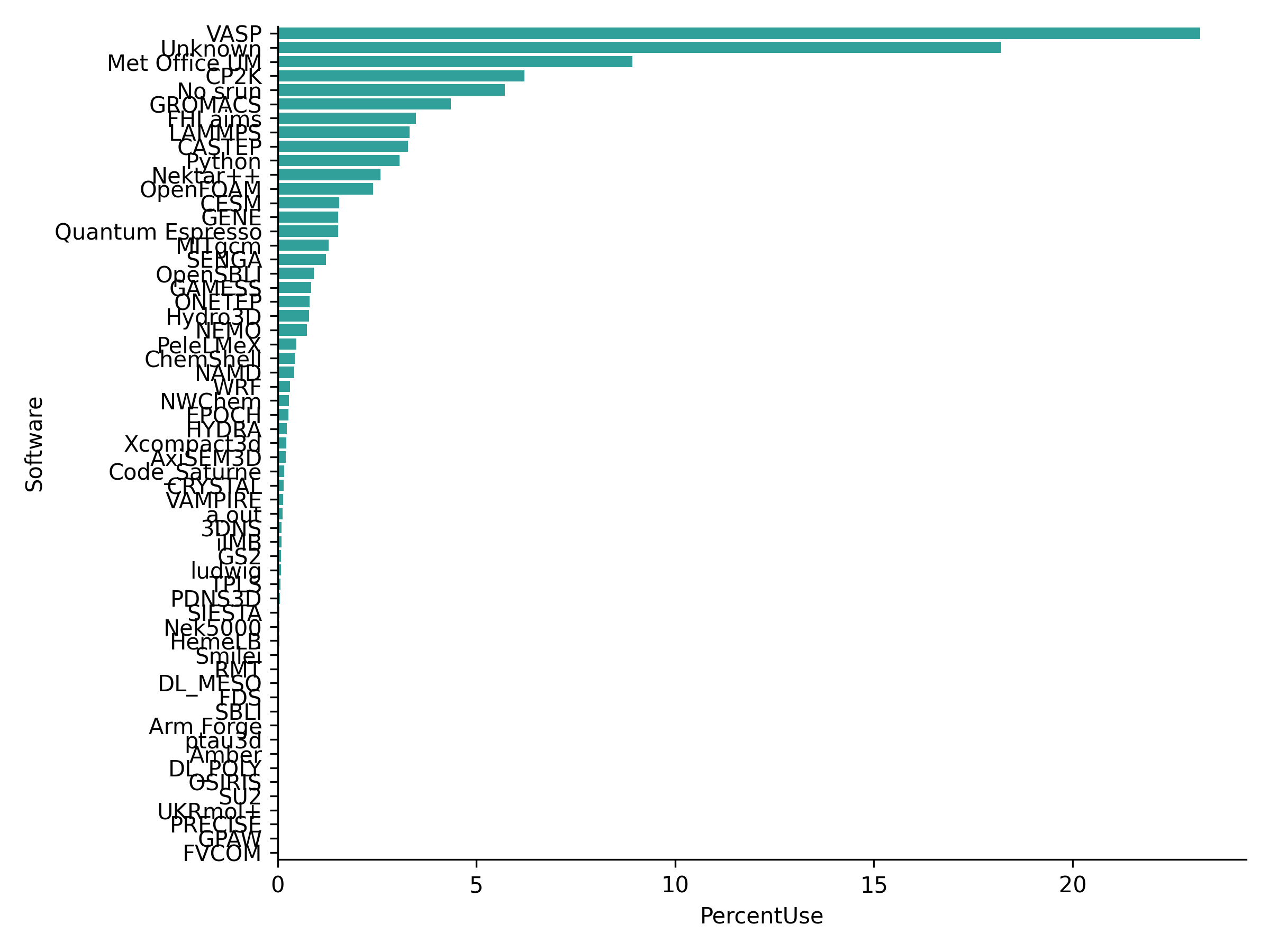

Software usage data

Plot and table of % use and job step size statistics for different software on ARCHER2 for Apr 2026. This data is also available as a CSV file.

This table shows job step size statistics in cores weighted by usage, total number of job steps and percent usage broken down by different software for Apr 2026.

| Software | Min | Q1 | Median | Q3 | Max | Jobs | Node hours | Percent use | Users | Projects |

|---|---|---|---|---|---|---|---|---|---|---|

| Overall | 0 | 480.0 | 896.0 | 2048.0 | 170368 | 4811220 | 3971027.7 | 100.0 | 1000 | 115 |

| VASP | 1 | 384.0 | 512.0 | 1024.0 | 16384 | 460179 | 921517.6 | 23.2 | 158 | 15 |

| Unknown | 1 | 900.0 | 1536.0 | 3200.0 | 131072 | 1119292 | 722630.3 | 18.2 | 510 | 87 |

| Met Office UM | 4 | 576.0 | 1152.0 | 6165.0 | 6400 | 63762 | 354611.0 | 8.9 | 48 | 2 |

| CP2K | 1 | 512.0 | 512.0 | 1024.0 | 9216 | 118509 | 246322.5 | 6.2 | 35 | 7 |

| No srun | 0 | 128.0 | 2048.0 | 8192.0 | 170368 | 196453 | 227064.7 | 5.7 | 724 | 95 |

| GROMACS | 1 | 512.0 | 768.0 | 1024.0 | 2048 | 29701 | 173250.5 | 4.4 | 38 | 4 |

| FHI aims | 1 | 512.0 | 512.0 | 1024.0 | 8192 | 54695 | 138215.3 | 3.5 | 29 | 6 |

| LAMMPS | 1 | 128.0 | 256.0 | 768.0 | 131072 | 85655 | 131985.3 | 3.3 | 56 | 17 |

| CASTEP | 1 | 336.0 | 400.0 | 400.0 | 2560 | 346323 | 130112.4 | 3.3 | 31 | 4 |

| Python | 1 | 1.0 | 512.0 | 1536.0 | 8192 | 1108291 | 121603.6 | 3.1 | 73 | 29 |

| Nektar++ | 1 | 5120.0 | 5120.0 | 5376.0 | 18432 | 419 | 102449.7 | 2.6 | 6 | 2 |

| OpenFOAM | 1 | 504.0 | 1024.0 | 1024.0 | 20480 | 61882 | 95156.5 | 2.4 | 55 | 19 |

| CESM | 1 | 512.0 | 1024.0 | 1792.0 | 5888 | 1556 | 61548.5 | 1.5 | 13 | 3 |

| GENE | 4 | 10240.0 | 10240.0 | 10240.0 | 16384 | 400 | 60642.1 | 1.5 | 5 | 4 |

| Quantum Espresso | 1 | 128.0 | 256.0 | 1024.0 | 4096 | 135133 | 60205.0 | 1.5 | 47 | 9 |

| MITgcm | 24 | 126.0 | 240.0 | 252.0 | 1152 | 45818 | 50695.4 | 1.3 | 16 | 4 |

| SENGA | 1 | 4096.0 | 5184.0 | 6480.0 | 8192 | 105 | 48307.6 | 1.2 | 4 | 3 |

| OpenSBLI | 128 | 64000.0 | 64000.0 | 64000.0 | 131072 | 23 | 36094.9 | 0.9 | 3 | 2 |

| GAMESS | 128 | 128.0 | 128.0 | 128.0 | 128 | 790 | 33533.8 | 0.8 | 10 | 1 |

| ONETEP | 4 | 360.0 | 360.0 | 360.0 | 1024 | 135 | 31710.2 | 0.8 | 5 | 1 |

| Hydro3D | 144 | 8000.0 | 8000.0 | 20800.0 | 20800 | 76 | 31366.2 | 0.8 | 4 | 2 |

| NEMO | 1 | 480.0 | 1344.0 | 5040.0 | 10080 | 966166 | 29359.6 | 0.7 | 24 | 4 |

| PeleLMeX | 128 | 2048.0 | 2048.0 | 4096.0 | 4096 | 99 | 18757.4 | 0.5 | 3 | 1 |

| ChemShell | 1 | 480.0 | 1024.0 | 12800.0 | 12800 | 967 | 16908.2 | 0.4 | 14 | 4 |

| NAMD | 8 | 288.0 | 288.0 | 512.0 | 576 | 4947 | 16801.2 | 0.4 | 5 | 3 |

| WRF | 64 | 384.0 | 384.0 | 384.0 | 384 | 97 | 12315.4 | 0.3 | 4 | 2 |

| NWChem | 1 | 384.0 | 576.0 | 672.0 | 768 | 1028 | 11473.6 | 0.3 | 6 | 2 |

| EPOCH | 128 | 1280.0 | 1280.0 | 4096.0 | 4096 | 577 | 10616.9 | 0.3 | 7 | 1 |

| HYDRA | 1 | 200.0 | 200.0 | 300.0 | 5120 | 159 | 9060.7 | 0.2 | 7 | 5 |

| Xcompact3d | 1 | 3840.0 | 5760.0 | 5760.0 | 16384 | 112 | 8542.0 | 0.2 | 7 | 3 |

| AxiSEM3D | 1024 | 12800.0 | 16000.0 | 16000.0 | 57600 | 119 | 8145.8 | 0.2 | 1 | 1 |

| Code_Saturne | 16 | 1024.0 | 1024.0 | 2048.0 | 8192 | 660 | 6474.4 | 0.2 | 6 | 4 |

| CRYSTAL | 16 | 64.0 | 128.0 | 128.0 | 1024 | 753 | 5806.5 | 0.1 | 7 | 3 |

| VAMPIRE | 128 | 512.0 | 1024.0 | 1024.0 | 2048 | 474 | 5623.1 | 0.1 | 5 | 2 |

| a.out | 1 | 768.0 | 4096.0 | 4096.0 | 4096 | 1597 | 4995.8 | 0.1 | 17 | 9 |

| 3DNS | 216 | 8000.0 | 13824.0 | 17576.0 | 17576 | 116 | 3865.6 | 0.1 | 1 | 1 |

| iIMB | 2304 | 2304.0 | 2304.0 | 2304.0 | 2304 | 48 | 3824.1 | 0.1 | 1 | 1 |

| GS2 | 112 | 2040.0 | 2352.0 | 11760.0 | 11760 | 205 | 3337.5 | 0.1 | 4 | 1 |

| ludwig | 8 | 512.0 | 512.0 | 512.0 | 512 | 829 | 3222.9 | 0.1 | 2 | 2 |

| TPLS | 2048 | 2048.0 | 2048.0 | 2048.0 | 2048 | 16 | 2654.9 | 0.1 | 2 | 1 |

| PDNS3D | 1024 | 1024.0 | 1024.0 | 1024.0 | 1024 | 34 | 2345.8 | 0.1 | 1 | 1 |

| SIESTA | 1 | 64.0 | 640.0 | 1600.0 | 2304 | 1103 | 1867.4 | 0.0 | 4 | 3 |

| Nek5000 | 1024 | 1024.0 | 1024.0 | 1024.0 | 2048 | 26 | 1705.2 | 0.0 | 2 | 1 |

| HemeLB | 4 | 1024.0 | 1024.0 | 1024.0 | 1280 | 16 | 1481.3 | 0.0 | 2 | 2 |

| Smilei | 8 | 128.0 | 128.0 | 128.0 | 256 | 28 | 1116.5 | 0.0 | 2 | 1 |

| RMT | 1280 | 1792.0 | 1792.0 | 1792.0 | 1792 | 51 | 821.6 | 0.0 | 2 | 1 |

| DL_MESO | 32 | 128.0 | 128.0 | 128.0 | 128 | 20 | 482.9 | 0.0 | 1 | 1 |

| FDS | 248 | 248.0 | 248.0 | 248.0 | 440 | 5 | 96.3 | 0.0 | 1 | 1 |

| SBLI | 64 | 512.0 | 512.0 | 512.0 | 512 | 19 | 93.4 | 0.0 | 2 | 2 |

| Arm Forge | 1 | 256.0 | 1280.0 | 1280.0 | 1280 | 1509 | 69.8 | 0.0 | 10 | 9 |

| ptau3d | 2 | 20.0 | 20.0 | 64.0 | 2560 | 90 | 63.0 | 0.0 | 3 | 1 |

| Amber | 8 | 64.0 | 64.0 | 64.0 | 64 | 6 | 36.0 | 0.0 | 2 | 2 |

| DL_POLY | 4 | 64.0 | 256.0 | 256.0 | 256 | 104 | 33.5 | 0.0 | 3 | 2 |

| OSIRIS | 512 | 512.0 | 512.0 | 512.0 | 512 | 19 | 3.4 | 0.0 | 1 | 1 |

| SU2 | 128 | 128.0 | 128.0 | 128.0 | 128 | 3 | 2.5 | 0.0 | 1 | 1 |

| UKRmol+ | 4 | 4.0 | 4.0 | 4.0 | 4 | 15 | 0.4 | 0.0 | 1 | 1 |

| PRECISE | 4 | 4.0 | 4.0 | 4.0 | 4 | 2 | 0.0 | 0.0 | 1 | 1 |

| GPAW | 1 | 1.0 | 1.0 | 1.0 | 1 | 1 | 0.0 | 0.0 | 1 | 1 |

| FVCOM | 1 | 1.0 | 1.0 | 1.0 | 1 | 3 | 0.0 | 0.0 | 1 | 1 |
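The percent use column can be related to the node hours column with a short calculation. The sketch below assumes that percent use is each package's node hours as a share of the Overall node hours, rounded to one decimal place; this assumption is consistent with the rows in the table but is not stated explicitly by the source. The values are copied from three rows of the table, and the function name `percent_use` is illustrative.

```python
# Node hours copied from three rows of the table above.
node_hours = {"VASP": 921517.6, "CP2K": 246322.5, "GROMACS": 173250.5}
overall_node_hours = 3971027.7  # node hours from the "Overall" row


def percent_use(package_node_hours, total_node_hours):
    """Share of total node hours as a percentage, to one decimal place."""
    return round(100.0 * package_node_hours / total_node_hours, 1)


shares = {
    name: percent_use(hours, overall_node_hours)
    for name, hours in node_hours.items()
}
print(shares)  # {'VASP': 23.2, 'CP2K': 6.2, 'GROMACS': 4.4}
```

The computed shares match the percent use values listed for these three packages.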